Tagged

#AI

7 posts

-

Claude Design: Anthropic's Natural-Language Pitch Deck and Mockup Generator

Anthropic launched Claude Design on April 17, 2026 — an experimental product powered by Claude Opus 4.7 that turns natural-language prompts into prototypes, slides, pitch decks, mockups, and one-pagers. It exports to Canva, PDF, PPTX, standalone HTML, and a Claude Code handoff bundle, and can ingest your codebase or design files to auto-build a reusable design system. This post covers the core features, subscription tiers, positioning against Figma, Canva, Google Stitch, v0, and Lovable, why Figma's stock dropped 7% on launch day, and the situations where Claude Design is not the right tool.

-

Claude Code /passes: Gift 7-Day Claude Pro Trials and Earn Referral Credits

Claude Code added a /passes command in December 2025 that lets Claude Max subscribers send three 7-day Claude Pro trial passes to friends. Each invitee who converts to a paid Pro plan earns the sender a $10 Claude credit plus extra usage. This post walks through how /passes works, the conditions for both sender and recipient, how it differs from claude.ai gift subscriptions, and a few details Anthropic hasn't fully documented.

-

Claude Opus 4.7: Coding Upgrades, Pricing, Context, and the Mythos Backstory

Anthropic shipped Claude Opus 4.7 on April 16, 2026 as its new flagship. This piece walks through the coding and vision upgrades (CursorBench rises from 58% to 70%, and Rakuten SWE-Bench shows 3× more tasks solved), how 4.7 compares to 4.6 on price, context, and features, and the unusual decision Anthropic openly disclosed: Opus 4.7 is a deliberately capability-reduced sibling of the still-gated Mythos Preview model. The reasoning behind that decision is worth a closer look.

-

Claude Extra Usage Credit: How to Claim Free Credits on Pro, Max, and Team Plans

Anthropic is giving Claude Pro, Max, and Team subscribers a limited-time Extra Usage Credit in April 2026 — up to $200 in free credit, redeemable across Claude, Claude Code, and Claude Cowork. This guide covers how much each plan gets, who qualifies, step-by-step instructions to enable Extra Usage and click Claim, plus the 90-day expiration and other fine print you need to know before the April 17 deadline.

-

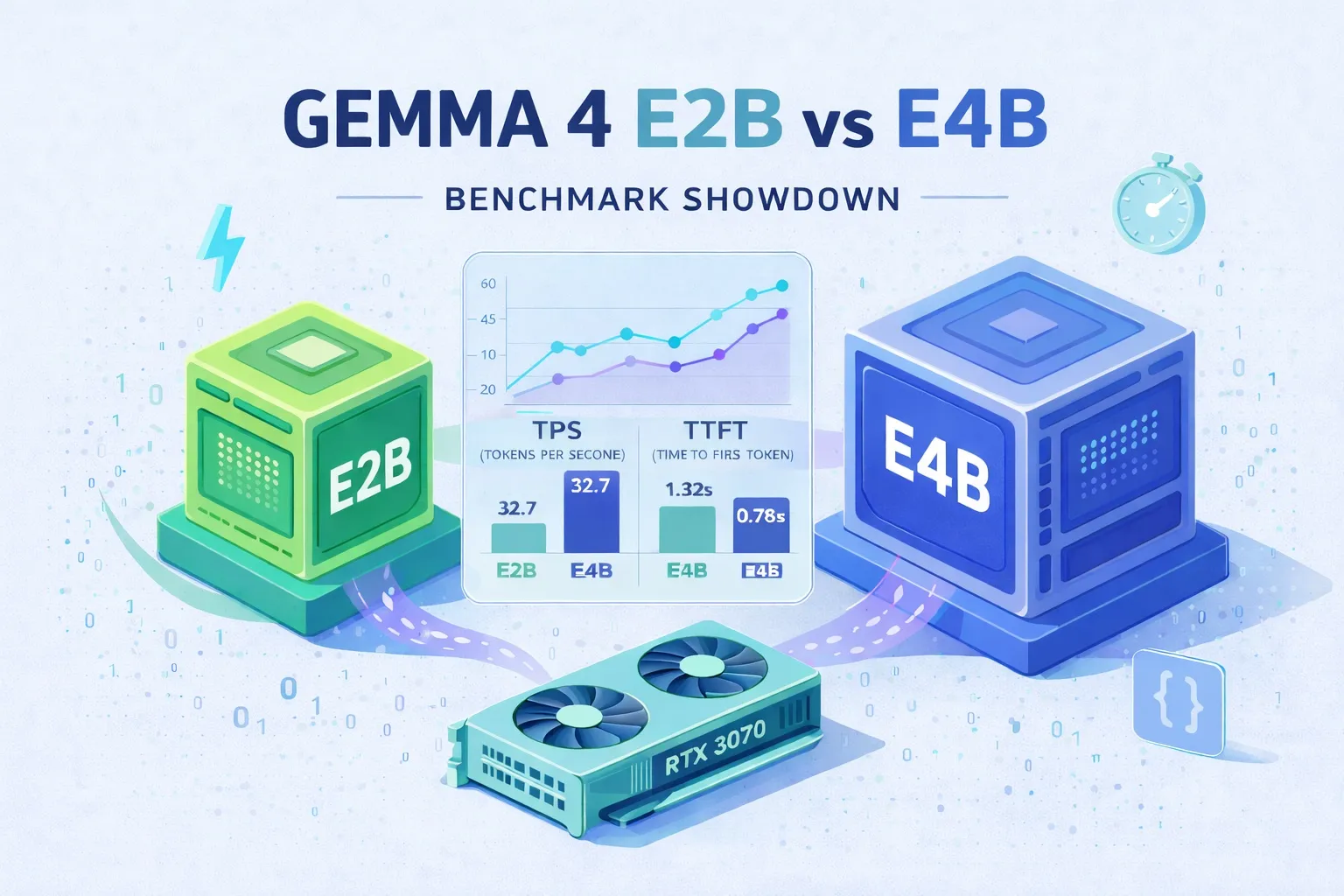

Gemma 4 E2B vs E4B Benchmark: The Hidden Thinking Mode That Makes the Smaller Model 20× Slower

A hands-on RTX 3070 benchmark of Gemma 4 E2B and E4B: TPS, TTFT, quality on hard and practical tasks, plus a deep dive that traces E2B's mysterious slowdown to an <|think|> token that Ollama's gemma4 renderer injects by default — contradicting the docs. Ends with best-practice presets and a note on running Claude Code locally.

-

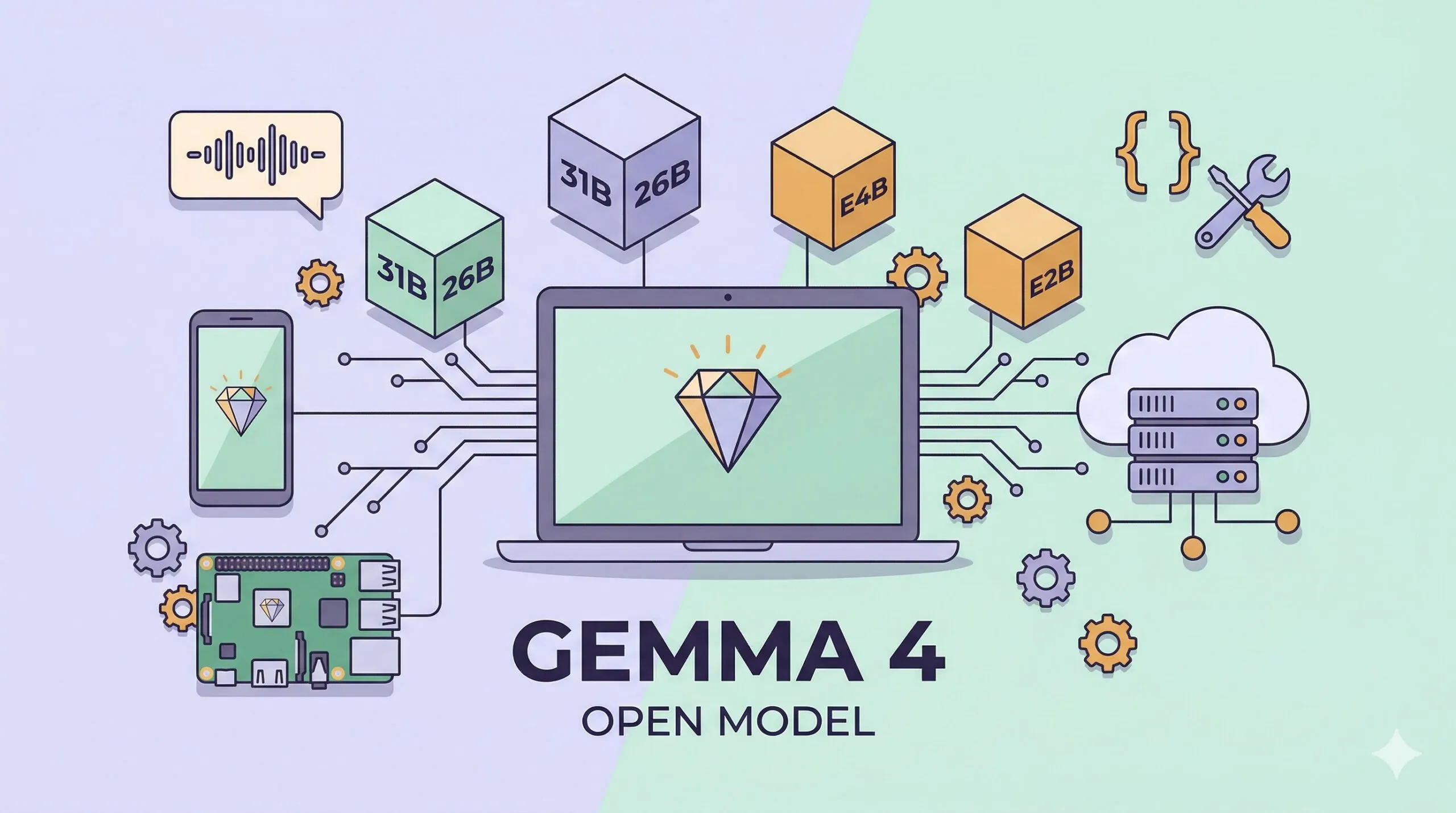

Google Gemma 4: Open-Source, Multimodal, Apache 2.0 — and 1.5GB to Run on a Phone

Google DeepMind's Gemma 4 is an open-weight model family under Apache 2.0, with four sizes (31B Dense, 26B MoE, E4B, E2B), native multimodal input, and edge deployment down to 1.5GB of memory. A hands-on tour of the benchmarks, the new Agent Skills story, and every way to run it — from your phone to a workstation.

-

Ollama Tutorial: Run Local LLMs on Windows, Linux, and macOS

A beginner-friendly walkthrough of Ollama — the easiest way to run open-source large language models on your own machine. Covers installation on Windows, Linux, and macOS, running your first model, choosing a model size for your hardware, and calling Ollama from Python or any REST client.