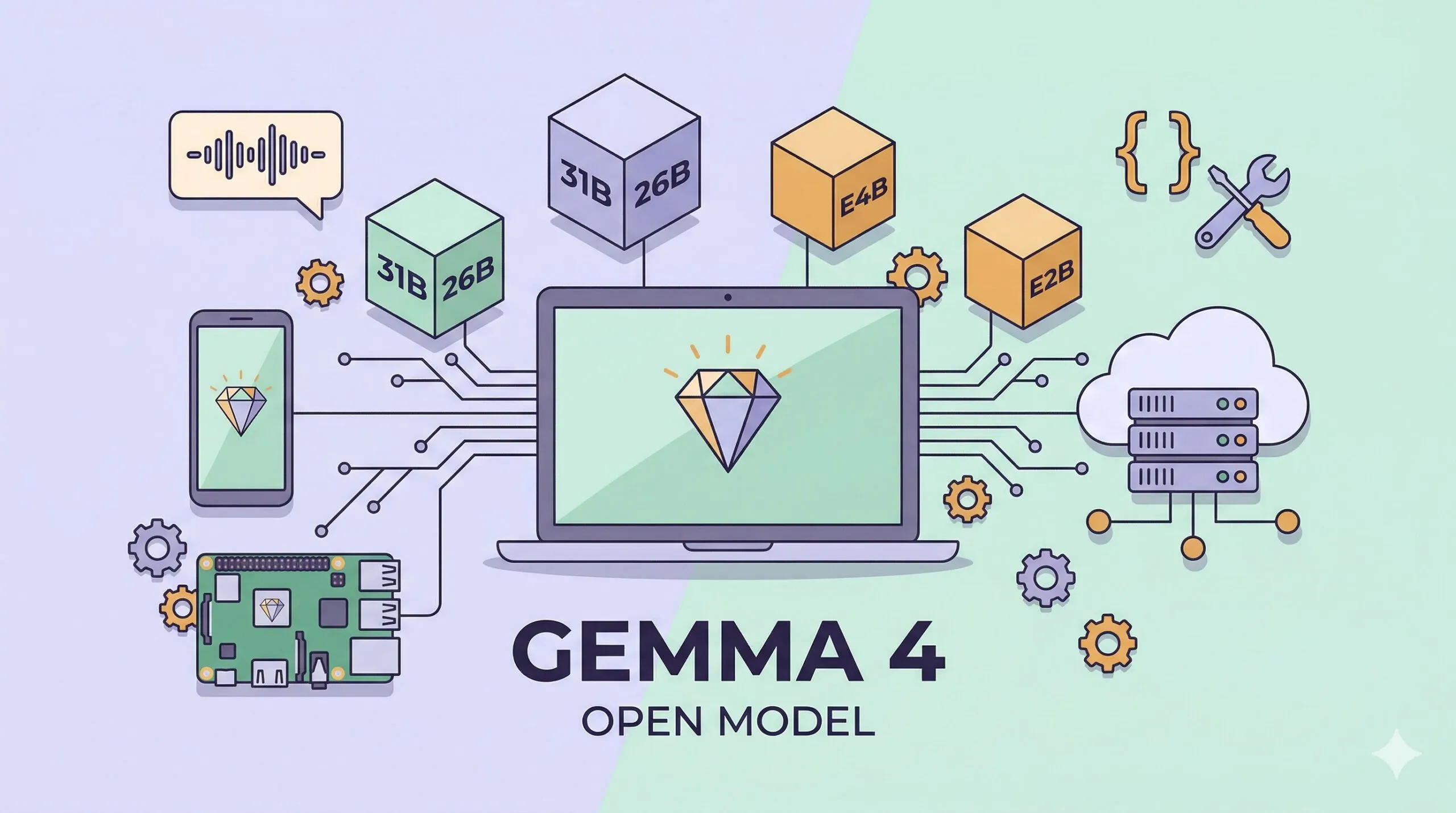

Google Gemma 4: Open-Source, Multimodal, Apache 2.0, and 1.5 GB to Run on a Phone

Open-source AI is getting crowded. Meta has Llama, Alibaba has Qwen, Mistral keeps shipping, DeepSeek is nipping at the heels of frontier labs. On April 2, 2026, Google DeepMind jumped into the mix with Gemma 4 — a family of open-weight models derived from Gemini 3 research, and this time released under the genuinely permissive Apache 2.0 license. No MAU cap, no acceptable-use appendix, no catches.

The pitch is direct: “byte for byte, the most capable open models.” It is not entirely marketing. The 31B Dense variant sits at third place among open models on the Arena AI text leaderboard, and the 26B MoE version reaches sixth while activating just 3.8B parameters per token. The smallest variant, E2B, runs multimodal inference in under 1.5 GB of memory — small enough for a phone, a Raspberry Pi, or a mid-range IoT device.

This post walks through the whole release: model lineup, benchmarks, multimodal capabilities, Agent Skills, and every way to actually run it on hardware you own.

What is Gemma 4?

Gemma is Google DeepMind’s open-weight model family, technically descended from their flagship Gemini line. Gemma 3 shipped in 2025 and was well received. Gemma 4 is the next step, rebuilt on research from Gemini 3 with meaningful architectural and training improvements.

The release ships four sizes that span everything from laptops and workstations down to phones:

- 31B Dense: the full 31-billion-parameter dense model. Highest quality, best for tasks that need maximum reasoning ability.

- 26B A4B MoE: 26 billion total parameters, but only 3.8 billion active per token thanks to a Mixture of Experts architecture. Gets close to the 31B’s quality at a fraction of the inference cost.

- E4B: the “E” stands for effective. 8B total parameters (including embeddings), about 4.5B effective. The mid-range edge model.

- E2B: 5.1B total parameters, about 2.3B effective. The lightest variant, and the one that fits in under 1.5 GB. Aimed at IoT devices and smartphones first.
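To make the "26 billion total, 3.8 billion active" idea concrete, here is a minimal sketch of top-k expert routing, the general mechanism behind Mixture of Experts layers. It is a toy in plain Python and not Gemma 4's actual router: the expert count, the value of `k`, and the router scores are all assumptions chosen for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights.

    Returns (expert_index, weight) pairs whose weights sum to 1.
    Only these k experts would run for this token; the rest stay idle.
    """
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    mass = sum(probs[i] for i in top)
    return [(i, probs[i] / mass) for i in top]

# Hypothetical router scores for one token across 8 experts.
scores = [0.1, 2.0, -1.0, 0.5, 1.5, -0.3, 0.0, 0.2]
chosen = route_top_k(scores, k=2)
print(chosen)  # experts 1 and 4 are selected

# Share of parameters touched per token, using the figures quoted above.
active_fraction = 3.8 / 26
print(f"{active_fraction:.1%} of parameters active per token")
```

With the quoted figures, roughly one parameter in seven does work on any given token, which is where the inference savings come from.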

Every size ships in both base and instruction-tuned (IT) flavors, and the weights are available on Hugging Face, Kaggle, and the Ollama library.

Spec sheet

| Model | Effective params | Total params | Layers | Context window | Modalities |
|---|---|---|---|---|---|
| E2B | 2.3B | 5.1B | 35 | 128K | Text · Image · Audio |
| E4B | 4.5B | 8B | 42 | 128K | Text · Image · Audio |
| 26B A4B MoE | 3.8B (active) | 26B | — | 256K | Text · Image · Video |
| 31B Dense | 31B | 31B | 60 | 256K | Text · Image · Video |

A few architectural ideas worth highlighting:

- Per-Layer Embeddings (PLE) — every layer gets its own embedding injection, creating a parallel conditioning path alongside the main residual stream. Helps the model handle multimodal inputs more cleanly.

- Shared KV Cache — later layers reuse the Key/Value tensors from earlier ones, slashing memory and compute for long-context inference.

- Hybrid attention — alternates between sliding-window local attention and global full-context attention, balancing efficiency and reach.

- Variable image token budget — you can spend anywhere from 70 to 1,120 tokens on a single image, trading speed for fidelity.
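The hybrid attention idea is easier to see as masks. The sketch below builds a sliding-window mask and a global causal mask, then labels layers in an alternating pattern. The window size and the local-to-global ratio are hypothetical values picked for readability, not Gemma 4's real configuration.

```python
def causal_mask(seq_len, window=None):
    """Build a causal attention mask as a list of lists of 0/1.

    mask[q][k] == 1 means query position q may attend to key position k.
    With window=None every earlier position is visible (global attention);
    with window=w only the w most recent positions are visible (local).
    """
    mask = []
    for q in range(seq_len):
        row = []
        for k in range(seq_len):
            visible = k <= q and (window is None or q - k < window)
            row.append(1 if visible else 0)
        mask.append(row)
    return mask

def layer_pattern(num_layers, global_every):
    """Label each layer 'local' or 'global', one global layer per group."""
    return ["global" if (i + 1) % global_every == 0 else "local"
            for i in range(num_layers)]

local = causal_mask(6, window=3)
full = causal_mask(6)
print(local[5])  # [0, 0, 0, 1, 1, 1]
print(full[5])   # [1, 1, 1, 1, 1, 1]
print(layer_pattern(6, global_every=3))
# ['local', 'local', 'global', 'local', 'local', 'global']
```

Local layers only look at a fixed number of recent positions, so their cost stops growing with context length, while the occasional global layer keeps distant tokens reachable.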

Benchmarks

The headline numbers come from the Arena AI text leaderboard: 31B Dense scores 1452 Elo, third among open models, and 26B A4B MoE scores 1441 Elo, sixth, despite activating only 3.8B parameters per token.
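For a sense of what the gap between 1452 and 1441 means, the textbook Elo formula converts a rating difference into an expected head-to-head score. This is a rough sketch that assumes the leaderboard's ratings behave like standard Elo on a 400-point scale; the leaderboard's exact fitting method may differ.

```python
def elo_expected_score(rating_a, rating_b):
    """Expected score of A against B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))

p = elo_expected_score(1452, 1441)
print(f"{p:.3f}")  # 0.516
```

Under that assumption the 31B Dense would be preferred over the 26B MoE in only about 52% of pairings, so the two are close to a coin flip on this leaderboard.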

Below is the detailed comparison with last year’s Gemma 3 27B.

Reasoning and knowledge

| Benchmark | Gemma 4 31B | Gemma 4 26B A4B | Gemma 4 E4B | Gemma 4 E2B | Gemma 3 27B |
|---|---|---|---|---|---|
| MMLU Pro | 85.2% | 82.6% | 69.4% | 60.0% | 67.6% |
| AIME 2026 | 89.2% | 88.3% | 42.5% | 37.5% | 20.8% |
| GPQA Diamond | 84.3% | 82.3% | 58.6% | 43.4% | 42.4% |
| BigBench Hard | 74.4% | 64.8% | 33.1% | 21.9% | 19.3% |

Gemma 4 31B lands at 89.2% on AIME 2026, more than four times Gemma 3 27B’s 20.8%. Even E2B, a 2.3B-effective-parameter model, edges past Gemma 3 27B on GPQA Diamond (43.4% vs. 42.4%).

Code

| Benchmark | Gemma 4 31B | Gemma 4 26B A4B | Gemma 4 E4B | Gemma 4 E2B |
|---|---|---|---|---|
| LiveCodeBench v6 | 80.0% | 77.1% | 52.0% | 44.0% |
| Codeforces Elo | 2150 | 1718 | 940 | 633 |

Vision

| Benchmark | Gemma 4 31B | Gemma 4 26B A4B | Gemma 4 E4B | Gemma 4 E2B |
|---|---|---|---|---|
| MMMU Pro | 76.9% | 73.8% | 52.6% | 44.2% |
| MATH-Vision | 85.6% | 82.4% | 59.5% | 52.4% |

Multimodal capabilities

Every Gemma 4 size is multimodal, but the supported modalities vary by tier:

- Image understanding (all sizes) — variable aspect ratio and resolution, no forced square cropping. Good for object detection, captioning, OCR, and chart analysis.

- Video understanding (31B, 26B A4B) — multi-frame analysis, clips up to 60 seconds.

- Audio processing (E2B, E4B) — USM-style Conformer encoder, supports speech-to-text and spoken Q&A, up to 30 seconds of audio per request.

- Multilingual — pretraining covers 140+ languages; post-training covers 35+.

An interesting design choice: the larger models (31B, 26B) support video but not audio, while the smaller edge models (E2B, E4B) flip it and support audio but not video. That is because the killer use case on-device is voice interaction, while the big-model workloads are more likely to involve visual documents and clips.

Agent capabilities and function calling

Agentic workflows are a major focus of this release. Gemma 4 natively supports:

- Function calling — define tools, let the model decide when to invoke them, and receive properly formatted call requests.

- Structured JSON output — no grammar constraint or post-processing needed; the model emits valid JSON directly.

- Multi-step reasoning and planning — break complex goals into sequential steps and execute them.

- System instructions — first-class support for defining agent behavior and guardrails.

- Extended thinking — up to 4,000 tokens of “think longer” mode for harder problems.
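On the application side, function calling boils down to parsing the model's structured output and dispatching to real code. Here is a minimal sketch of that loop. The JSON shape (`name` plus `arguments`) and the `get_weather` tool are assumptions for illustration; the actual tool-call format should be taken from the model card.

```python
import json

def get_weather(city):
    """Hypothetical tool; a real one would call a weather service."""
    return {"city": city, "forecast": "sunny"}

# Registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}

def dispatch(model_output):
    """Parse a JSON tool call emitted by the model and run the matching tool.

    Raises ValueError for malformed JSON or unknown tools, so the caller
    can feed the error back to the model instead of crashing.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model output is not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")
    name = call.get("name")
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    return TOOLS[name](**call.get("arguments", {}))

# Assumed shape of a tool call emitted by the model.
emitted = '{"name": "get_weather", "arguments": {"city": "Taipei"}}'
result = dispatch(emitted)
print(result)
```

Even when a model reliably emits valid JSON, keeping the parse and lookup checks is cheap, and it gives an agent loop a clean error to hand back to the model.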

Google is also pitching a new concept called Agent Skills — reusable capability bundles that let developers build fully offline autonomous workflows on the edge: extending a knowledge base, generating interactive content, chaining other models, all on-device without a network.

Actually running it on the edge

The most exciting thing about Gemma 4, for me, is the edge story. With 2-bit / 4-bit quantization, E2B runs in under 1.5 GB of memory. That means most modern smartphones — Android or iPhone — can run it.
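A quick back-of-the-envelope calculation shows how quantization gets into that range. This sketch counts weight storage only, assumes 1 GB means 10^9 bytes, and ignores activations, KV cache, and runtime overhead, so treat the results as lower bounds.

```python
def weights_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

E2B_EFFECTIVE = 2.3e9  # effective parameters, from the spec sheet
E2B_TOTAL = 5.1e9      # total parameters, including embeddings

for label, params in [("effective", E2B_EFFECTIVE), ("total", E2B_TOTAL)]:
    for bits in (2, 4):
        print(f"{label:9s} @ {bits}-bit: {weights_gb(params, bits):.2f} GB")
```

At 4 bits the effective parameters come to about 1.15 GB, while all 5.1B parameters would need about 2.55 GB. The source does not say how the memory budget is split, but the arithmetic suggests the sub-1.5 GB figure relies on lower-bit quantization, on not keeping every parameter resident, or on both.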

Some numbers from the release:

- Raspberry Pi 5 running E2B: ~133 tokens/s prefill, ~7.6 tokens/s decode.

- With GPU acceleration, 4,000 input tokens plus two Agent Skills complete in under 3 seconds.

- 4× faster and 60% more energy-efficient than the previous generation.
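The Raspberry Pi throughput figures translate into wall-clock time with simple division. The sketch below assumes prefill and decode run back to back at the quoted rates; the 400-token prompt and 100-token reply are made-up example sizes.

```python
PREFILL_TPS = 133.0  # prompt tokens processed per second (quoted above)
DECODE_TPS = 7.6     # output tokens generated per second (quoted above)

def estimate_seconds(prompt_tokens, output_tokens):
    """Estimate total latency as prefill time plus decode time."""
    return prompt_tokens / PREFILL_TPS + output_tokens / DECODE_TPS

total = estimate_seconds(prompt_tokens=400, output_tokens=100)
print(f"{total:.1f} s")  # 16.2 s
```

Decode dominates: the 100 output tokens take about 13 of those 16 seconds, so on a Pi short answers matter more than short prompts.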

Supported platforms: Android, iOS, Windows, Linux, macOS (Metal-accelerated), WebGPU browsers, Raspberry Pi, and Qualcomm QC8 NPU. Hardware-optimized paths are available on Google, MediaTek, and Qualcomm’s latest AI accelerators.

How to run Gemma 4

Plenty of entry points. Pick whichever matches your hardware.

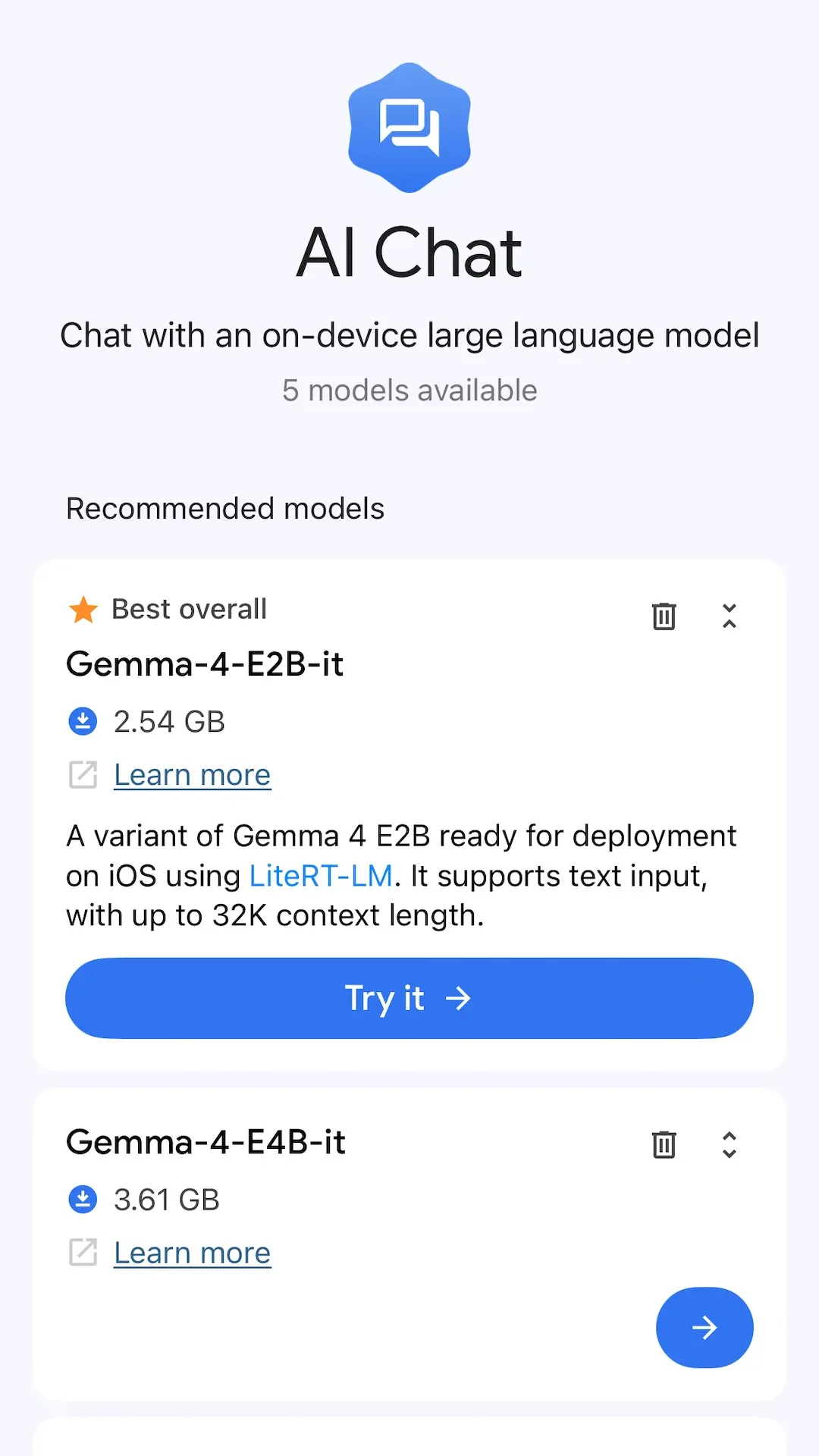

On your phone

The easiest way to get Gemma 4 on a phone is the Google AI Edge Gallery app — Google Play for Android and the App Store for iOS. It downloads E2B or E4B to your device, and everything runs offline. No code required.

Android developers have a second option: the AICore Developer Preview. That is Android’s system-level on-device AI service — your app calls an API and Gemma 4 runs in a shared runtime. The interesting part: E2B and E4B are the foundation of the future Gemini Nano 4, so code you write against Gemma 4 today will run unchanged on Gemini Nano 4 devices later.

On iPhone, besides AI Edge Gallery, you can also deploy via iOS builds of llama.cpp or Core ML-aware toolchains. Since E2B needs under 1.5 GB, recent iPhones have plenty of headroom.

One curious detail worth knowing about: the same E4B model is 3.61 GB on AI Edge Gallery but 9.6 GB on Ollama, roughly a 2.7× difference. The reason is format and quantization precision. AI Edge Gallery uses Google’s LiteRT (previously TFLite), optimized for mobile GPUs and NPUs with aggressive 4-bit quantization. Ollama uses GGUF, designed for desktop CPUs and Apple Silicon, and defaults to a higher-precision quantization (typically Q4_K_M or better). The mobile version trades a bit of quality for smaller size and lower RAM use; the desktop version keeps more of the model’s detail at the cost of disk space.
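Dividing file size by parameter count gives a rough average precision for each download. This sketch assumes the whole file is weights, that 1 GB means 10^9 bytes, and that both files cover all 8B total parameters from the spec sheet; real files also carry metadata and may store some tensors at different precisions.

```python
E4B_TOTAL_PARAMS = 8e9  # total parameters, from the spec sheet

def bits_per_param(file_gb, num_params):
    """Average bits stored per parameter for a model file of file_gb GB."""
    return file_gb * 1e9 * 8 / num_params

edge = bits_per_param(3.61, E4B_TOTAL_PARAMS)
ollama = bits_per_param(9.6, E4B_TOTAL_PARAMS)
print(f"AI Edge Gallery: {edge:.2f} bits/param")
print(f"Ollama:          {ollama:.2f} bits/param")
print(f"size ratio:      {9.6 / 3.61:.2f}x")
```

That works out to roughly 3.6 bits per parameter on mobile versus 9.6 on desktop, which fits the picture of an aggressively quantized mobile build next to a higher-precision desktop one.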

Ollama

If you have not run a local model before, start with the Ollama tutorial to get the runtime installed. Once that is in place, one command boots any Gemma 4 size:

```shell
# E2B: the lightest, ideal for laptops and phones
ollama run gemma4:e2b

# E4B
ollama run gemma4:e4b

# 26B MoE: needs more RAM, but fast inference
ollama run gemma4:26b

# 31B Dense: highest quality, heaviest footprint
ollama run gemma4:31b
```

More info at ollama.com/library/gemma4.
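Once a model is running, Ollama also serves a local HTTP API, which is handy for scripting. The sketch below uses only the standard library and targets Ollama's `/api/generate` endpoint on its default port 11434. The helper names `build_request` and `generate` are made up for this example, and the model tag is the one used in this post.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default port

def build_request(prompt, model="gemma4:e2b"):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def generate(prompt, model="gemma4:e2b"):
    """Send the prompt and return the generated text."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]

# Usage (needs a running Ollama server with the model already pulled):
# print(generate("Summarize the Apache 2.0 license in one sentence."))
```

Setting `stream` to false returns one JSON object with the full reply in its `response` field, which keeps the client simple at the cost of waiting for the whole generation.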

llama.cpp

For finer control, llama.cpp + a GGUF quantization works well:

```shell
# macOS install
brew install llama.cpp

# Launch an OpenAI-compatible API server
llama-server -hf ggml-org/gemma-4-E2B-it-GGUF

# Pick a quantization level (Q4_K_M is the sweet spot)
llama-server -hf ggml-org/gemma-4-26b-a4b-it-GGUF:Q4_K_M
```

MLX on Apple Silicon

Mac users can lean on Apple’s own MLX framework, which is specifically tuned for M-series chips:

```shell
# Install mlx-vlm
pip install -U mlx-vlm

# Multimodal inference: image + text
mlx_vlm.generate \
  --model google/gemma-4-E4B-it \
  --image photo.jpg \
  --prompt "Describe this image"

# TurboQuant saves roughly 4× memory on the KV cache
mlx_vlm.generate \
  --model mlx-community/gemma-4-26B-A4B-it \
  --prompt "Explain quantum computing" \
  --kv-bits 3.5 \
  --kv-quant-scheme turboquant
```

Google AI Studio

If you do not want to run anything locally, the 31B and 26B MoE models are hosted on Google AI Studio — no download, just open the site and start chatting. E2B and E4B can be tried through the Google AI Edge Gallery.

Hugging Face transformers

Python developers can load Gemma 4 directly through transformers:

```python
from transformers import pipeline

# Quick start with pipeline
pipe = pipeline("any-to-any", model="google/gemma-4-e2b-it")

messages = [
    {
        "role": "user",
        "content": [
            {"type": "image", "image": "photo.jpg"},
            {"type": "text", "text": "What is in this image?"},
        ],
    }
]

output = pipe(messages, max_new_tokens=200)
print(output)
```

Apache 2.0: real open source

Worth calling out the license change explicitly. Gemma 3 shipped under Google’s custom Gemma Terms of Use — free, but with a handful of restrictions. Gemma 4 switches to Apache 2.0, which means:

- No monthly active user (MAU) cap.

- No additional acceptable-use-policy strings attached.

- Full commercial freedom.

- Modify, fork, and redistribute freely, provided you keep the license text and attribution notices that Apache 2.0 requires.

That puts Gemma 4 on the same footing as Mistral’s Apache 2.0 releases, and makes it arguably more permissive than Meta’s Llama (custom license, 700 million MAU cap). For enterprise teams that have been reluctant to build on Llama because of the MAU clause, this removes a real blocker.

Closing thoughts

Gemma 4 is a serious addition to the open-weight landscape. The 31B and 26B MoE go head-to-head with the best open models for raw reasoning, while E2B and E4B push multimodal AI all the way down to phones and IoT devices. Combine that with Apache 2.0, native agent tooling, and 140+ language coverage, and it becomes a model family worth seriously considering — whether you are building a chatbot, running RAG, doing code generation, or embedding offline AI in an Android app.

If you have not tried running open-source models locally yet, start with the Ollama tutorial. Once Ollama is installed, a single ollama run gemma4:e2b gets you there.

References

- Gemma 4: Byte for byte, the most capable open models — Google official announcement

- Gemma 4 — Google DeepMind — DeepMind model page

- Gemma 4 Model Card — Official specs and benchmarks

- Bring state-of-the-art agentic skills to the edge with Gemma 4 — Google Developers Blog, edge deployment deep dive

- Gemma 4: The new standard for local agentic intelligence on Android — Android Developers Blog

- Announcing Gemma 4 in the AICore Developer Preview — AICore Developer Preview announcement

- Welcome Gemma 4: Frontier multimodal intelligence on device — Hugging Face technical walkthrough

- Google announces open Gemma 4 model with Apache 2.0 license — 9to5Google coverage