Tagged

#Multimodal

1 post

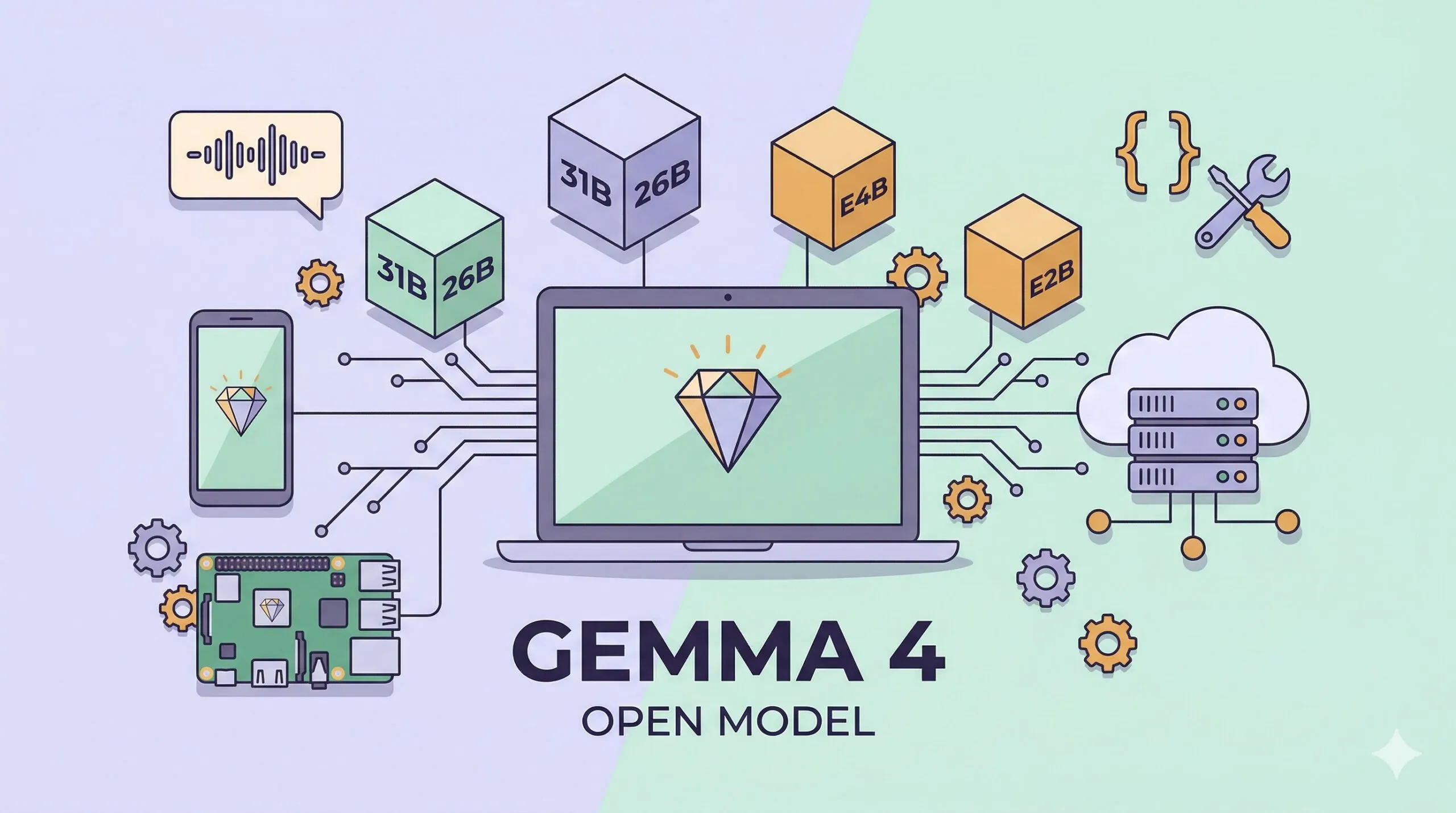

Google Gemma 4: Open-Source, Multimodal, Apache 2.0 — and 1.5GB to Run on a Phone

Google DeepMind's Gemma 4 is an open-weight model family under Apache 2.0, with four sizes (31B Dense, 26B MoE, E4B, E2B), native multimodal input, and edge deployment down to 1.5GB of memory. A hands-on tour of the benchmarks, the new Agent Skills story, and every way to run it — from your phone to a workstation.