Tagged

#Ollama

2 posts

-

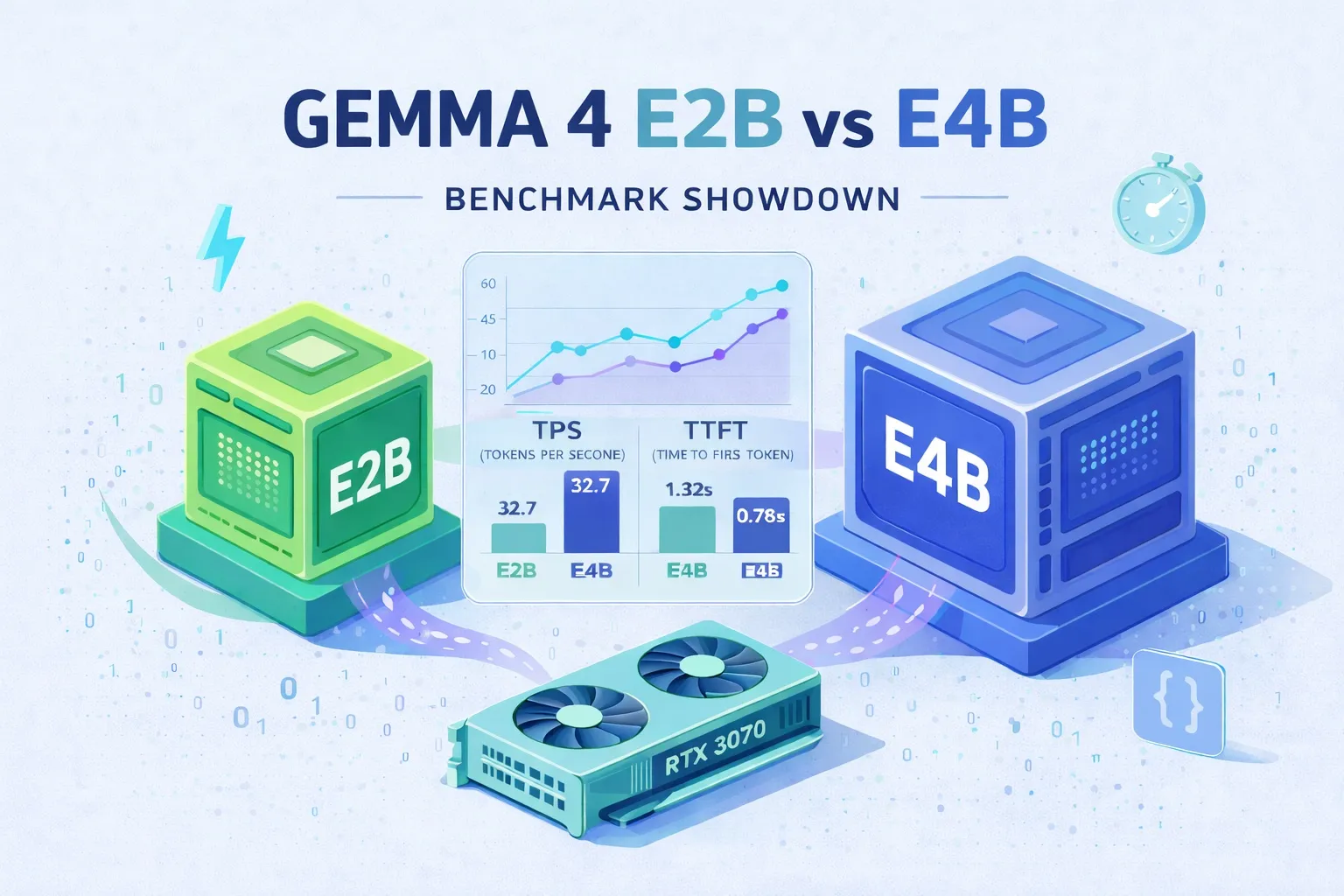

Gemma 4 E2B vs E4B Benchmark: The Hidden Thinking Mode That Makes the Smaller Model 20× Slower

A hands-on RTX 3070 benchmark of Gemma 4 E2B and E4B: TPS, TTFT, quality on hard and practical tasks, plus a deep dive that traces E2B's mysterious slowdown to an <|think|> token that Ollama's gemma4 renderer injects by default — contradicting the docs. Ends with best-practice presets and a note on running Claude Code locally.

-

Ollama Tutorial: Run Local LLMs on Windows, Linux, and macOS

A beginner-friendly walkthrough of Ollama — the easiest way to run open-source large language models on your own machine. Covers installation on Windows, Linux, and macOS, running your first model, choosing a model size for your hardware, and calling Ollama from Python or any REST client.