Nginx Rate Limiting Tutorial: limit_req, burst, and nodelay Explained

Run a website long enough and you’ll see weird traffic. The login endpoint suddenly takes hundreds of password attempts per second. A bot starts crawling your API at full speed with zero respect for robots.txt. Or a single buggy client falls into an infinite retry loop and eats every connection on the box. By the time your application code reacts, the CPU is already on fire — the requests never even made it to your code. Nginx’s built-in limit_req module exists for exactly this situation: a few lines of config in front of your app, and abusive traffic gets dropped at the edge while real users keep working.

The configuration looks short, but the concepts behind it aren’t obvious. burst, nodelay, and delay interact in non-trivial ways, and most copy-paste templates from the internet end up either too strict (real users hit 503) or too loose (effectively no limit). This guide walks through the leaky bucket algorithm, breaks down each parameter, compares the three common modes side-by-side, and finishes with production-grade configs.

Why You Need Rate Limiting

Rate limiting caps how many requests a single source can send in a unit of time. It looks superficially like a firewall, but the model is different. A firewall is allow/deny: a request matches a rule, it’s blocked or permitted. Rate limiting is quota-based: the first N requests pass, anything over the quota gets rejected. For users who aren’t malicious — just running buggy code — rate limiting is far friendlier than an outright IP ban. They get throttled for a moment and recover on their own.

Common places to put a rate limit:

- Login, signup, password reset — to defeat brute-force attacks and mass fake-account creation

- Public API endpoints — so a single client can’t consume the entire service’s capacity

- Expensive endpoints like search, file downloads, report generation — to stop someone from triggering them in a loop and dragging the system down

- The site’s overall front door — as the outermost layer, absorbing traffic spikes before they reach the backend

Rate limiting isn’t a silver bullet. Distributed crawlers (each IP sends only a handful of requests, but there are tens of thousands of IPs) bypass it completely — that’s where you bring in a WAF or Cloudflare-style edge service. But for the 90% case of everyday abusive traffic, a single layer of Nginx rate limiting absorbs most of it.

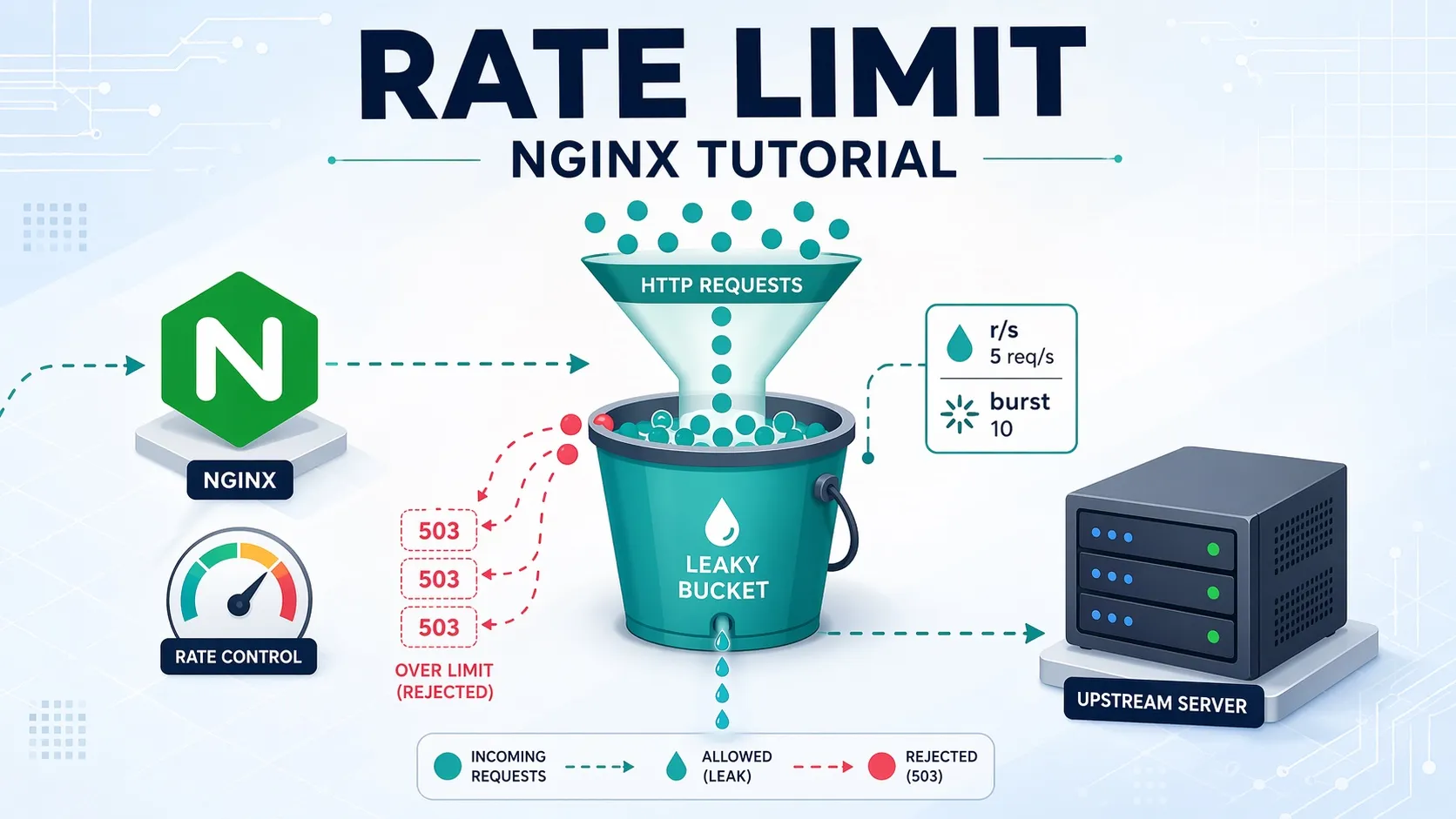

The Leaky Bucket Algorithm

Nginx’s limit_req uses the classic leaky bucket algorithm. Picture a kitchen sink: the faucet might be turned on at any moment (incoming requests), but the drain at the bottom flows at a fixed rate (the backend’s processing speed). When water flows in slower than it drains, the sink stays empty and everything is fine. When a sudden flood arrives, water rises in the sink — but as long as it hasn’t overflowed, you’re still OK. Once the water level passes the rim, the excess just spills onto the floor (rejected requests).

Mapping back to Nginx, the bucket’s drain rate is rate, the bucket’s capacity is burst, and anything over burst overflows and gets a rejection status code. Since Nginx 1.3.15 introduced the limit_req_status directive, the default has always been 503 (Service Unavailable). You can change it to 429 (Too Many Requests) with limit_req_status 429;. Semantically 429 is more accurate and avoids confusion with real server failures, so modern services almost always switch. If you observe 429 out of the box, something upstream — your config, or a Cloudflare-style proxy rewriting the status — has already made the change. nginx -T | grep limit_req_status will tell you. The big win of the leaky bucket model is predictability: no matter how much traffic shows up in a short window, the backend never sees more than rate requests per second, so it can’t get crushed.

The Two Directives: limit_req_zone and limit_req

Nginx splits rate limiting into two directives. limit_req_zone defines what a bucket looks like, and limit_req applies that bucket at a specific location. The split lets you share one zone across many location blocks, or apply different zones at different paths.

# Define the zone (must live in the http {} block)

limit_req_zone $binary_remote_addr zone=mylimit:10m rate=10r/s;

server {

location /api/ {

# Apply the zone (server or location block)

limit_req zone=mylimit burst=20 nodelay;

proxy_pass http://backend;

}

}Short, but every field matters. The next sections walk through limit_req_zone’s three fields and limit_req’s parameters one at a time.

key: what identifies a request source

The first argument to limit_req_zone is the key — the unit you count quota against. The most common choice is $binary_remote_addr, the client IP. Why $binary_remote_addr instead of $remote_addr? The binary form is 4 bytes (IPv4) or 16 bytes (IPv6); the string form is 7–15 bytes for IPv4 alone. The difference shows up in shared-memory usage, and it adds up once you’re tracking many IPs.

Other keys are valid too. Common choices:

$binary_remote_addr— per client IP (most common)$server_name— per virtual host, so the whole domain shares one quota$http_x_api_key— per API key (for header-authenticated services)$cookie_session_id— per logged-in session- Empty string

""— no key, the entire endpoint shares one global quota (useful for protecting expensive endpoints)

Watch out: if Nginx sits behind another reverse proxy or CDN (Cloudflare, AWS ALB, etc.), $binary_remote_addr will be the proxy’s IP and all traffic counts as the same source. In that case you need $http_x_forwarded_for or $realip_remote_addr, plus a properly configured real_ip module to whitelist trusted upstreams — otherwise the field is trivially spoofable.

zone: the shared memory area

zone=mylimit:10m defines a shared-memory region named mylimit that’s 10 MB in size. Nginx’s worker processes use this region to track each key’s current bucket level and last-update timestamp.

How many keys fit in 10 MB? The official docs say roughly 16,000 IPv4 states per MB, so 10 MB tracks about 160,000 unique IPs. That’s plenty for most small-to-medium sites; large sites bump it up to 32 MB or 64 MB. If the zone fills up, Nginx returns 503 and logs a message — that’s your signal to grow it.

rate: the average speed

rate=10r/s means at most 10 requests per second per key. Nginx also accepts r/m (per minute), e.g. rate=30r/m. The catch: internally Nginx converts this to a minimum interval between requests. 10r/s means one every 100 ms; 30r/m means one every 2 seconds. So 30r/m is not “30 requests in any one-minute burst” — it’s “one request every 2 seconds.” If you want burst behavior, that’s what the burst parameter is for.

burst: allowing short-term bursts

burst is the bucket’s capacity — how many requests can queue up beyond the steady rate. With no burst (capacity 0), anything faster than rate is rejected immediately. In practice, traffic is rarely uniformly distributed: a single page load can fire 5–10 requests in an instant (HTML, CSS, JS, images, API calls), all counted against the quota. With burst=0, you’ll throttle real users.

A reasonable default is 2–5× the rate. Bigger burst tolerates spikes better, but also lets attackers pack more requests in before getting rejected.

nodelay: don’t artificially slow burst requests

With burst alone (no nodelay), requests above rate get queued and processed at the configured rate. With rate=2r/s burst=4, when 5 requests arrive simultaneously the first runs immediately and the rest go out at 0.5 s, 1.0 s, 1.5 s, and 2.0 s. From the user’s perspective, the later requests have artificially extended response times.

Add nodelay and the behavior changes: the burst bucket processes everything immediately, but the bucket level doesn’t reset to zero. Instead it refills at the steady rate. So users don’t see latency on the burst, but if they fire more requests in the next few seconds they’ll be rejected (the bucket hasn’t refilled yet). This is the friendliest behavior for real users and the default choice for most web apps.

The three modes are easier to grasp visually:

Modes ② and ③ accept the same total number of requests (5 succeed, 1 returns 503) — they only differ in when. Mode ② smooths the load on the backend but adds visible latency. Mode ③ lets all 5 burst hit the backend instantly, but the user experience is best. If your backend can absorb a brief spike, nodelay is almost always the right call.

delay: the hybrid mode

Nginx 1.15.7 added the delay parameter, which gives you a hybrid: “first N processed immediately, the rest queued.” For example, burst=10 delay=5 lets the first 5 requests through instantly (like nodelay), queues requests 6–10 with delay, and rejects the 11th and beyond with 503. Useful when you want some elasticity for real users but don’t want the entire burst to land on the backend at once.

Real-World Configuration Examples

Basic: protecting the login page

The login page is the classic brute-force target, and the quota can be aggressive — real users don’t log in dozens of times per second. Here’s a config that allows 1 attempt per second per IP, with a burst of 5 to accommodate fast retries:

http {

# A zone called "login", 1 request per second

limit_req_zone $binary_remote_addr zone=login:10m rate=1r/s;

# Use 429 (Too Many Requests) instead of the default 503 — semantically clearer

limit_req_status 429;

server {

location = /login {

limit_req zone=login burst=5 nodelay;

proxy_pass http://backend;

}

}

}Tiered: general API vs. heavy endpoints

Large API services often grade their endpoints. General queries get a generous quota, while expensive endpoints (search, reports) get a tight one. By defining multiple zones and applying them in different location blocks, you can do tiered limiting:

http {

# General API: 20 requests per second

limit_req_zone $binary_remote_addr zone=api_general:10m rate=20r/s;

# Heavy endpoints: 2 requests per second

limit_req_zone $binary_remote_addr zone=api_heavy:10m rate=2r/s;

server {

# Default: general limit

location /api/ {

limit_req zone=api_general burst=40 nodelay;

proxy_pass http://backend;

}

# Search: stack the heavy limit on top of the general one

location /api/search {

limit_req zone=api_general burst=40 nodelay;

limit_req zone=api_heavy burst=5 nodelay;

proxy_pass http://backend;

}

}

}Multiple limit_req directives in the same location each create an independent bucket, and the request must pass all of them to be allowed through. So /api/search is constrained both by the global API quota and by its own heavy-endpoint quota.

Behind Cloudflare (orange-cloud proxy)

If your site is on Cloudflare’s orange-cloud (proxy mode), every request hits a Cloudflare edge node first and is then forwarded to your origin. Nginx’s $remote_addr becomes the edge node’s IP (something like 173.245.x.x or 104.16.x.x). Use $binary_remote_addr as the rate-limit key in this state and bad things happen — every user on Earth is bucketed together, and you’ll throttle the entire site within seconds. The flow looks like this:

Cloudflare puts the real client IP in one of three headers:

CF-Connecting-IP— Cloudflare’s own header, single IP, available on all plans. This is the recommended choice.X-Forwarded-For— the standard header, but it can contain multiple IPs and the client can spoof it (you needreal_ip_recursive onto walk back to the closest trusted IP).True-Client-IP— Cloudflare Enterprise only, behaves likeCF-Connecting-IP.

The right approach is Nginx’s built-in ngx_http_realip_module (compiled into most distros’ Nginx by default). You tell Nginx: “trust requests from these CIDR ranges; for those, replace $remote_addr with the value in this header.” Full config:

http {

# === Cloudflare IPv4 ranges ===

set_real_ip_from 173.245.48.0/20;

set_real_ip_from 103.21.244.0/22;

set_real_ip_from 103.22.200.0/22;

set_real_ip_from 103.31.4.0/22;

set_real_ip_from 141.101.64.0/18;

set_real_ip_from 108.162.192.0/18;

set_real_ip_from 190.93.240.0/20;

set_real_ip_from 188.114.96.0/20;

set_real_ip_from 197.234.240.0/22;

set_real_ip_from 198.41.128.0/17;

set_real_ip_from 162.158.0.0/15;

set_real_ip_from 104.16.0.0/13;

set_real_ip_from 104.24.0.0/14;

set_real_ip_from 172.64.0.0/13;

set_real_ip_from 131.0.72.0/22;

# === Cloudflare IPv6 ranges ===

set_real_ip_from 2400:cb00::/32;

set_real_ip_from 2606:4700::/32;

set_real_ip_from 2803:f800::/32;

set_real_ip_from 2405:b500::/32;

set_real_ip_from 2405:8100::/32;

set_real_ip_from 2a06:98c0::/29;

set_real_ip_from 2c0f:f248::/32;

# Pull the real IP from CF-Connecting-IP

real_ip_header CF-Connecting-IP;

# After this, $binary_remote_addr is the actual end-user IP

limit_req_zone $binary_remote_addr zone=mylimit:10m rate=10r/s;

}Critical: explicitly list the trusted ranges. Never shortcut to 0.0.0.0/0 (trust everyone). Otherwise an attacker who finds your origin IP (bypassing Cloudflare) and forges CF-Connecting-IP can pretend to be any IP and bypass the rate limit entirely. If you’re worried about origin IP exposure, also restrict ports 80/443 to Cloudflare’s ranges at the firewall layer for defense in depth.

Auto-syncing the Cloudflare IP list

That IP list is long to copy by hand, and Cloudflare adds new ranges occasionally — hard-coding it goes stale. A small script that pulls from Cloudflare’s official endpoint plus a weekly cron does the job:

#!/bin/bash

# /etc/nginx/update-cloudflare-ips.sh

set -e

OUT=/etc/nginx/conf.d/cloudflare-realip.conf

TMP=$(mktemp)

{

echo "# Auto-generated from Cloudflare IP list"

echo "# Updated at $(date -Iseconds)"

curl -fsS https://www.cloudflare.com/ips-v4 | sed 's|^|set_real_ip_from |;s|$|;|'

curl -fsS https://www.cloudflare.com/ips-v6 | sed 's|^|set_real_ip_from |;s|$|;|'

echo "real_ip_header CF-Connecting-IP;"

} > "$TMP"

# Validate the new config; only swap and reload if it parses

mv "$TMP" "$OUT"

nginx -t && nginx -s reloadThe generated file gets included into http {} automatically (via include /etc/nginx/conf.d/*.conf), so the $binary_remote_addr your limit_req_zone reads is the real client IP. Cron entry:

# Sync every Sunday at 03:00

0 3 * * 0 /etc/nginx/update-cloudflare-ips.sh >> /var/log/cf-ip-update.log 2>&1Verifying the real IP actually works

The most-skipped step after configuring real IP is verifying it. The simplest check is to log both $remote_addr (after override) and $http_cf_connecting_ip (the original header) and compare:

log_format cfdebug '$remote_addr | cf=$http_cf_connecting_ip | xff=$http_x_forwarded_for '

'"$request" $status';

server {

access_log /var/log/nginx/access.log cfdebug;

# ...

}Visit the site from a few different networks (mobile data, home Wi-Fi, VPN), then check the log. If $remote_addr matches cf= and equals your actual current IP, the config works. If $remote_addr is still a Cloudflare IP (104.16.x.x, 172.64.x.x, etc.), either the CIDR list doesn’t cover the edge node or the header name is wrong. Once you’ve confirmed it, switch the log format back.

How Cloudflare’s own rate limiting splits responsibility with Nginx

Cloudflare also offers rate limiting (limited on the Free plan, full-featured on paid plans). If Cloudflare already throttles, do you still need the Nginx layer? In practice yes, do both. The split:

- At the Cloudflare layer: large-scale DDoS, bot traffic, domain-wide quotas. The traffic never reaches your origin, saving bandwidth and CPU.

- At the Nginx layer: fine-grained per-endpoint quotas (login, specific APIs, heavy endpoints), and the last line of defense if Cloudflare ever fails open.

One subtlety: Cloudflare does connection coalescing by default — a single edge node opens only a small number of keep-alive connections to your origin and pipes all user requests through them. So if you’re using limit_conn, set it generously, or you’ll throttle Cloudflare itself.

Monitoring and Debugging

Don’t deploy and forget. Watch the Nginx error log to confirm that throttled requests really are abuse and not real users you’re collateral-damaging. Requests blocked by limit_req produce log lines like:

2026/04/20 10:23:45 [error] 1234#0: *5678 limiting requests, excess: 5.123 by zone "mylimit", client: 192.0.2.1, server: example.com, request: "GET /api/users HTTP/1.1"If error level feels too noisy, downgrade with limit_req_log_level warn; or notice. For production, plug a monitoring stack (Prometheus + Grafana, Datadog, cloud LB logs, etc.) into the 4xx/5xx breakdown — once you’ve set limit_req_status 429, you can isolate 429 counts to track how often the rate limit fires.

A common debugging trap: testing with curl from the same machine looks like the limit isn’t working, because 127.0.0.1 requests aren’t always counted as “real” traffic, or you’re just not sending enough requests to trip the threshold. Use a load tool like ab (Apache Bench) or wrk:

# 10 concurrent connections, 100 requests total — watch the 503/429 ratio

ab -n 100 -c 10 https://example.com/api/usersCommon Pitfalls

A few things that catch people in production:

- Setting rate to “the maximum acceptable value” —

rateis the average, not the cap. The cap comes fromburst. If you want “at most 100 per second,” that’srate=100r/s burst=0, notrate=100r/s burst=200 nodelay(the latter can let 201 requests through in an instant). - NAT collateral damage — users behind a corporate or campus NAT share one external IP. Using IP as the key buckets all of them together. With many users on one NAT, switch to a different identifier (session, API key) or loosen the rate.

- Forgetting to exclude static assets — applying rate limiting at the site root counts images, CSS, and JS against the quota too, and normal browsing trips the limit. Either separate

locationblocks for static vs. dynamic, or only apply the limit to API and sensitive paths. - WebSocket and long-lived connections —

limit_reqonly counts requests. After a WebSocket upgrade, the connection isn’t HTTP requests anymore and the rule does nothing for individual messages. For WebSocket protection, uselimit_conn(connection count). - Reload doesn’t reset counters —

nginx -s reloadis a hot reload; the shared-memory zone keeps its state. Newratevalues take effect immediately, but existing buckets keep their current levels. To wipe state completely, you needrestart.

Rate limiting is “easy to configure, hard to tune.” After the initial deploy, watch real traffic for a while and adjust. Start loose (nodelay with a generous burst), confirm there’s no collateral damage, then tighten. Nginx also has limit_req_dry_run for “log only, don’t block” — useful for observing what would get throttled before you commit.